As befits an agile product development process, ours at atrify has evolved steadily over the past few years. In today's blog post I would like to talk a little about this process and how it matured from our first steps in the agile world into today's customer-centric product cycle.

Essentially, two major areas shape the content of our releases: the input from our strategic portfolio management, and the ideas and feedback that we as Product Owners gather from the community and the active users of our applications.

Feedback is the Key: quantitative and qualitative!

We do not know what our users want. At least not until we ask them. And this is exactly where we now invest a considerable amount of effort.

Regular customer surveys give us quantitative feedback on their satisfaction and on missing features. These usually take the form of questionnaires that allow both scale-based answers and open-ended questions, for example about the features that are missed most.

For about two years we have been measuring the Net Promoter Score and also running a System Usability Test. Put simply, the Net Promoter Score is a recommendation rate whose value should ideally rise with every new survey. The System Usability Test is a standardized questionnaire that yields quantitative feedback on the usability of the software.
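For readers who have not worked with the Net Promoter Score before, here is a minimal sketch of how it is commonly calculated. It assumes the usual 0 to 10 "How likely are you to recommend us?" scale, where ratings of 9 or 10 count as promoters and ratings of 0 to 6 count as detractors. The function name and the sample ratings are made up for illustration and are not taken from our actual surveys.

```python
def net_promoter_score(ratings):
    """Return the NPS (between -100 and +100) for a list of 0-10 ratings.

    Assumption: the common definition is used, i.e. the percentage of
    promoters (9-10) minus the percentage of detractors (0-6).
    """
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")

    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical survey round with ten answers:
# 4 promoters, 3 detractors, 3 passives.
sample_ratings = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(sample_ratings))  # prints 10.0
```

Comparing this value from one survey round to the next is what shows whether the recommendation rate is moving in the right direction.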

Data collection through business analytics and feature measurement via Google Analytics gives us further valuable quantitative feedback. Here we can see exactly how often particular features in the applications are used, and where users drop out of the user journey.

This gives us valuable information for improving particular workflows and features, and it also tells us which features are not well received, so that we can simplify and streamline the software where appropriate. Users are pleased because they get a tidy interface with only the features that make sense, and developers are pleased because the software becomes less complex.

Simplicity, the art of maximizing the amount of work not done, is essential

Based on the surveys mentioned above, we regularly follow up on individual feedback with user interviews and seek personal contact with individual users.

Most of these user interviews are triggered by negative feedback. It is often worth asking the affected users what caused the negative feedback and how the situation could be improved. Very often this yields quick wins that can be implemented quickly and easily.

We Listen

As a wholly owned subsidiary of GS1 Germany, we also work with GS1 organizations all over the world. We operate our application for them as a service in their countries and under their patronage. In this setup, these GS1 organizations act as multipliers into their markets. They know retail and industry there, along with the processes and expectations, better than anyone.

Reason enough to dedicate a format of their own to these key customers, which we have named “We listen”. Here these customers can talk with us about the activities in their markets and tell us about change requests and feature wishes. Quite often, common ground emerges across the different organizations.

But it is not only the direct line to the customer that delivers important insights; the indirect one does too. We therefore also work closely with Sales and prospective customers on the one hand, and with Support and active customers on the other. Support in particular, which has its ear closest to the customer and usually knows exactly how they work and how they use the application, is indispensable when it comes to discovering and clustering problem areas in the use of our software.

Strategic Portfolio Management

Anyone who has looked into Dr. Klaus Leopold's Flight Level model will know how important strategic portfolio management is, and that agile ways of working must also take hold on the upper floors of a company.

Priorities for initiatives, features, and projects must already be discussed at that level, and a holistic understanding of the work in progress must be established.

Goals that are supported by features in the applications, or that require larger, holistic changes to the software, are just as much an input from this board as paid customer-specific adaptations or larger initiatives that may affect several products at once.

Pre-Prioritizing Product Ideas

As you can well imagine, the first area in particular, with its manifold collection methods, yields a great many ideas and features. So that we as Product Owners can spend our time on the things that matter most for the product, rather than on discussing features that will not make it into the product any time soon anyway, we roughly pre-prioritize these ideas as a first step.

A so-called MoSCoW matrix helps us here. It is really very simple and offers four quadrants labeled Must, Should, Could, and Won’t. While the Must section holds the absolutely essential things for the product, Should lists valuable features that are also worth implementing, though not necessarily in the next release or the one after, for example because users will not reject the product if they are missing.

Then there is the Could section, which collects everything that makes sense if time and resources are left over, and finally the Won’t section. It is certainly fair to ask why one would attach a Won’t section at all if there is no plan to implement the items in it anyway. For us it serves to make visible that these topics are not considered worthwhile, particularly because the board is reviewed regularly, so these topics are at least known to everyone and can be discussed again.
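To make the idea of the matrix a little more tangible, here is a small sketch of how such a board could be modeled. This is purely illustrative: the class names, the helper functions, and the example ideas are hypothetical, and our real board is a visual one rather than code.

```python
from dataclasses import dataclass
from enum import IntEnum


class Priority(IntEnum):
    """The four MoSCoW sections, ordered from most to least urgent."""
    MUST = 0
    SHOULD = 1
    COULD = 2
    WONT = 3


@dataclass(frozen=True)
class Idea:
    title: str
    priority: Priority


def build_board(ideas):
    """Group idea titles by MoSCoW section, keeping all four sections visible."""
    board = {priority: [] for priority in Priority}
    for idea in ideas:
        board[idea.priority].append(idea.title)
    return board


def discussion_candidates(board):
    """Return the ideas worth detailed discussion now: Must first, then Should."""
    return board[Priority.MUST] + board[Priority.SHOULD]


# Hypothetical ideas, invented for this example.
ideas = [
    Idea("Bulk export", Priority.SHOULD),
    Idea("Fix login timeout", Priority.MUST),
    Idea("Dark mode", Priority.COULD),
    Idea("Fax integration", Priority.WONT),
]

board = build_board(ideas)
print(discussion_candidates(board))  # ['Fix login timeout', 'Bulk export']
print(board[Priority.WONT])          # ['Fax integration']
```

Note that the Won’t items stay on the board instead of being deleted, which mirrors the point above: they remain visible for the regular review.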

Scaling@Atrify

We, too, have several agile teams working in different areas. They are partly split by product, but they also like to switch when there are large topics or initiatives to implement. Current examples are the migration of the software from Oracle persistence to PostgreSQL, or a large data quality initiative of our parent company.

Looking at the visualization, the whole thing is somewhat reminiscent of LeSS. In effect we have a Chief PO who coordinates with the POs of the individual teams both in daily stand-ups and in a more detailed round every two weeks.

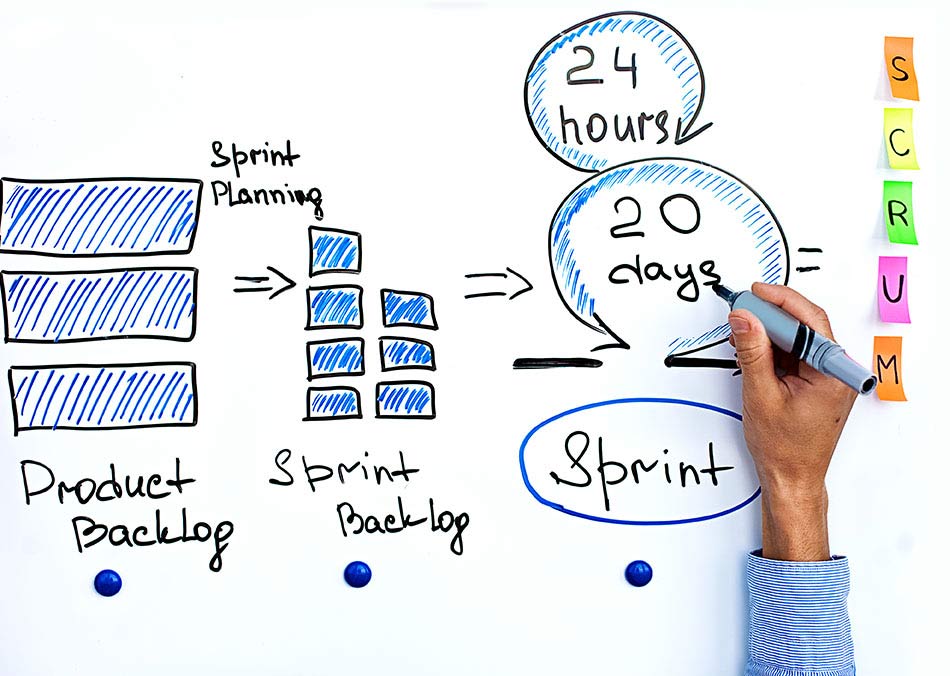

Below that, our teams generally work according to plain Scrum, meaning they have their Daily Scrums, review meetings, refinements, and plannings.

Some review meetings are held per team, but it also happens quite often that several teams present their results in a joint review session. We post the review dates on a board in our employee lounge so that anyone who wants to can attend. Since our meeting rooms do have capacity limits, the Post-its also include the code for the video conference.

Deliver often

We now ship a release every six weeks. That adds up to about 8 releases a year, twice as many as in the past. Changes to the attribute model, code lists, or validations go out even more frequently, often on demand.

We started out back then with a quarterly release, and every delivery felt like an adventure. Sometimes far-reaching code changes from more than twelve weeks, touching various underlying technologies and combined with data model changes, always made the rollout a challenge.

The changes we now make within six weeks, by contrast, are mostly manageable. The higher deployment frequency also turns deployment into routine rather than a challenge.

Deliver working software frequently, from a couple of weeks to a couple of months, with a preference to the shorter timescale

Six weeks has proven to be a good best practice for delivery. Even shorter release cycles would make little sense, because we often have features that cannot be fully completed within one or even two sprints. With six weeks we also seem to be in prominent company, since Google with the Chrome browser and Mozilla with Firefox, for example, ship at the same frequency.

Development & Release Cycles

As a rule, we develop a release over three sprints of two weeks each, then enter a two-week code freeze during which the release is thoroughly checked once more, before customers likewise get a two-week test phase. Only after that does the release go into production with the new features.
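The phases described above can be laid out on a calendar with a few lines of code. The sketch below assumes that the phases of a single release run strictly back to back; how consecutive releases overlap is not modeled. The function name and the start date are made up for illustration.

```python
from datetime import date, timedelta

# Phase lengths in weeks, as described in the text.
PHASES = [
    ("development (3 sprints of 2 weeks)", 6),
    ("code freeze", 2),
    ("customer test phase", 2),
]


def release_timeline(development_start):
    """Return (phase name, start date, end date) tuples for one release.

    Assumption: each phase starts on the day the previous one ends.
    The end date of the last phase is the production go-live date.
    """
    timeline = []
    phase_start = development_start
    for name, weeks in PHASES:
        phase_end = phase_start + timedelta(weeks=weeks)
        timeline.append((name, phase_start, phase_end))
        phase_start = phase_end
    return timeline


# Hypothetical start date for the first sprint of a release.
for name, start, end in release_timeline(date(2024, 1, 1)):
    print(f"{name}: {start} to {end}")
```

With these phase lengths, a single release takes ten weeks from the first sprint to production.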

Preliminary release notes and a final version of the document keep customers up to date (last-minute changes do still come up, and these are then explicitly retested).

Welcome changing requirements, even late in development. Agile processes harness change for the customer's competitive advantage

Every two releases or so, the whole company is called together for a product launch event. Here the Product Owners present the new features of the software releases to a larger audience, often live on the systems and supported by marketing-grade slides! This helps everyone in the company stay up to date on how the products are evolving, and it particularly helps Support prepare for the new features and potential customer questions.

That’s all, folks

That should be it for now about our software development process. At some point I also started to visualize the process as such…

Naturally, this process will keep evolving as well; my head is already full of ideas. Drivers such as test automation and the upcoming reorganization of our product management, in particular, will influence it again.

The regular exchange with a strong agile community in Cologne also keeps providing inspiration and brings new ideas to light.

About the Author

Daniel Haupt is a certified Product Owner at atrify and responsible for the agile development of solutions for industry and retail. He always enjoys getting to know new approaches and methods in the agile world, and he likes waterfalls only in the great outdoors.